In the 18 years that Maxar has been operating Earth imaging satellites, we have collected over 100 PB of imagery about our changing planet. For most of that time, this vast amount of data was stored in a series of tape libraries running in our data centers. But in 2015, Maxar kicked off a project to modernize: moving the entire imagery archive to the cloud. We aimed to optimize our IT costs while serving our customers better by putting our archive where they were already working. In collaboration with Amazon Web Services (AWS), we used AWS Snowmobile, essentially a datacenter in a ruggedized semi-trailer truck, to transfer our archive to Amazon’s cloud.

Once our data was in the cloud, we faced a new challenge: managing our storage costs. In the data storage world, there is a fundamental trade-off between how quickly data can be accessed and how much it will cost to store. More expensive systems offer faster data access, while cheaper systems mean slower data access. These costs can be difficult to calculate in a traditional data center environment, but in the cloud they are crystal clear: Amazon Glacier, which offers data retrieval times ranging from a few minutes to several hours, is just one-fifth the cost of Amazon S3, which offers instantaneous data access.

The general strategy to optimize AWS storage costs is to put as much data in Amazon Glacier as possible. But that doesn’t work for data that is frequently accessed. It’s necessary to understand data access patterns to decide what data to store in Amazon Glacier and what data belongs in S3. The sheer size of our imagery archive makes this analysis challenging for humans to do, but we tried it. In the animation below, the blue dots represent what humans decided to cache (almost the whole world) and the orange dots represent what our customers requested access to over a three-month period. We were missing the mark by a long shot.

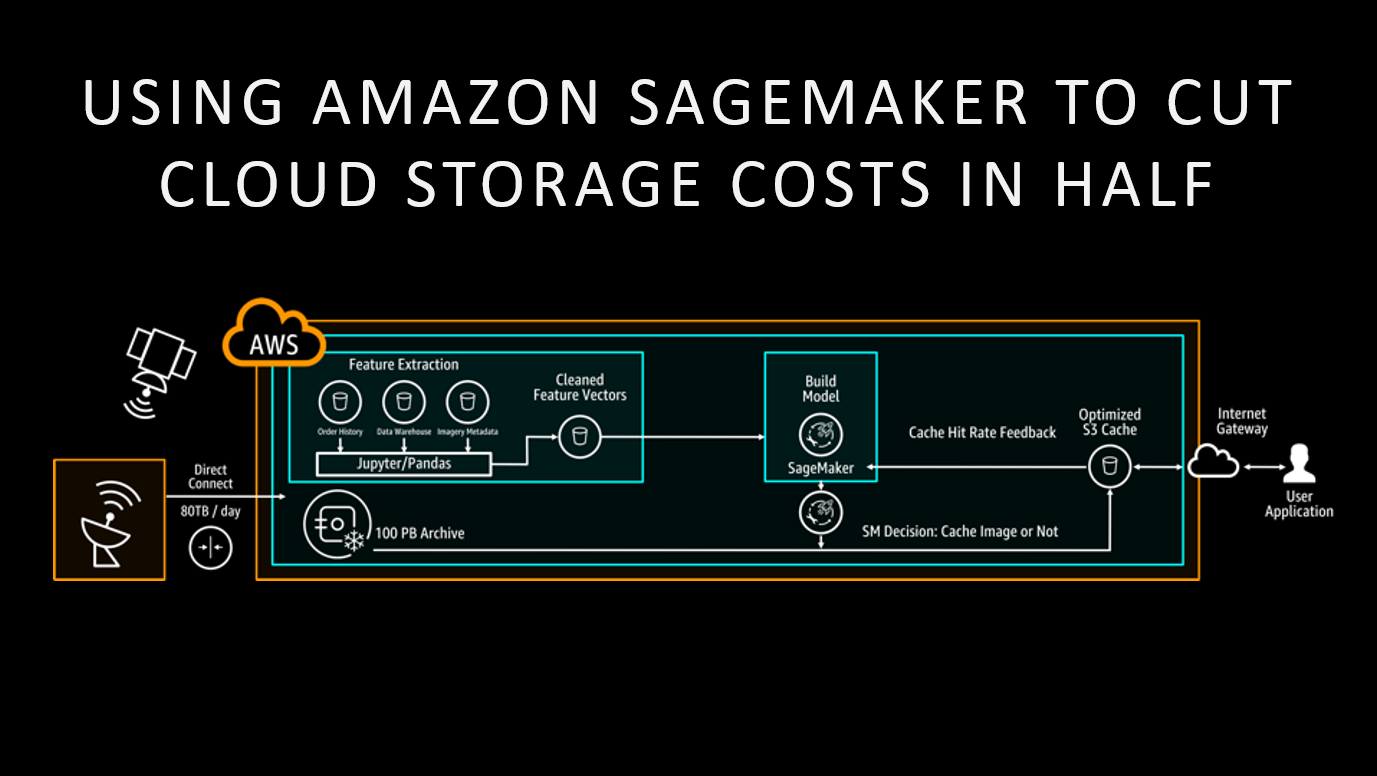
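To make the storage trade-off concrete, here is a minimal sketch of the arithmetic. It uses only the one-fifth price ratio quoted above; the function names, the relative cost unit, and the example hot fractions are illustrative assumptions, and the sketch ignores retrieval fees and request charges, which a real estimate would need to include.

```python
def blended_cost(total_pb, hot_fraction, s3_unit_cost=1.0, glacier_ratio=0.2):
    """Relative storage cost when `hot_fraction` of the archive sits in S3.

    Costs are in arbitrary units (1.0 = price of one PB in S3), not dollars.
    glacier_ratio=0.2 encodes the "one-fifth the cost of S3" figure.
    """
    if not 0.0 <= hot_fraction <= 1.0:
        raise ValueError("hot_fraction must be between 0 and 1")
    hot_pb = total_pb * hot_fraction      # kept in S3 for instant access
    cold_pb = total_pb - hot_pb           # moved to Glacier
    return hot_pb * s3_unit_cost + cold_pb * s3_unit_cost * glacier_ratio


def savings_vs_all_s3(hot_fraction, glacier_ratio=0.2):
    """Fraction of the all-S3 bill saved by tiering (storage cost only)."""
    return (1.0 - hot_fraction) * (1.0 - glacier_ratio)


if __name__ == "__main__":
    # Hypothetical hot fractions, chosen only to show the shape of the curve.
    for hot in (1.0, 0.5, 0.25, 0.0):
        cost = blended_cost(100, hot)
        print(f"hot={hot:.2f}  relative cost={cost:.1f}  "
              f"savings={savings_vs_all_s3(hot):.0%}")
```

The smaller the share of the archive that truly needs instant access, the closer the bill gets to the Glacier floor, which is why predicting which images will be ordered matters so much.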

We were excited to get a chance to participate in the beta testing cycle for a new service: Amazon SageMaker, which is a complete machine learning platform as a fully-managed service. Announced at AWS re:Invent 2017, Amazon SageMaker unlocks the power of machine learning for a wider audience – in our case, infrastructure engineers with no prior experience in data science. It gives anyone the ability to quickly build, train and deploy machine learning models at any scale.

For a team new to machine learning, the hardest part is knowing where to start. Luckily, we had help from the Amazon ML Solutions Lab, which partnered us with machine learning experts from Amazon. After studying our data storage problem, they worked with us to cast it as a machine learning problem. This stage of the machine learning process, from problem formulation to model selection, can be tricky for beginners to get right since it requires experience with a variety of machine learning models.

Once we had the model representation in hand, our next task was “feature engineering” – extracting meaningful inputs from the raw data to send to the machine learning algorithm. In Maxar’s case, we had two datasets: an inventory of all images in our archive and our commercial order history. We wanted the machine learning algorithm to find the characteristics of an image that make it more likely to be ordered, since those images should be placed in S3 for rapid access. We used Amazon SageMaker Notebooks to conduct this analysis, and ultimately built a model which we predict will reduce cloud storage costs for our imagery archive by 50%.
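As a rough illustration of the feature engineering step, the sketch below joins an image inventory with an order history to produce labeled training rows. It is not Maxar's actual pipeline: the `ImageRecord` fields (`age_days`, `cloud_cover_pct`, `region`), the function name, and the sample data are all hypothetical, chosen only to show the shape of the task.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImageRecord:
    """One row of the image inventory (all fields are hypothetical)."""
    image_id: str
    age_days: int
    cloud_cover_pct: float
    region: str


def build_training_rows(inventory, ordered_ids):
    """Pair each image's features with a label: 1 if ever ordered, else 0."""
    ordered = set(ordered_ids)  # set membership keeps the join linear
    rows = []
    for img in inventory:
        features = {
            "age_days": img.age_days,
            "cloud_cover_pct": img.cloud_cover_pct,
            "region": img.region,
        }
        label = 1 if img.image_id in ordered else 0
        rows.append((features, label))
    return rows


if __name__ == "__main__":
    # Made-up sample data, for illustration only.
    inventory = [
        ImageRecord("img-001", 30, 5.0, "urban"),
        ImageRecord("img-002", 2900, 80.0, "ocean"),
        ImageRecord("img-003", 400, 12.5, "urban"),
    ]
    order_history = ["img-001", "img-003", "img-001"]  # repeat orders allowed
    for features, label in build_training_rows(inventory, order_history):
        print(label, features)
```

A classifier trained on rows like these would output a likelihood of being ordered for each image, and images scoring above some threshold would stay in S3 while the rest move to Amazon Glacier.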

Machine learning is currently one of the most exciting trends in the tech industry, but its use has largely been confined to data scientists working on problems such as computer vision. Indeed, Maxar has a number of data scientists who use machine learning to analyze satellite imagery to gain actionable business insights. With Amazon SageMaker, a broader group of technologists can leverage machine learning techniques to solve a wider range of problems, such as optimizing cloud storage costs.

Dr. Walter Scott, CTO of Maxar Technologies and founder of DigitalGlobe, describes Maxar's use of Amazon SageMaker in detail during Amazon CTO Werner Vogels’s keynote speech at AWS re:Invent 2017. Watch it here.