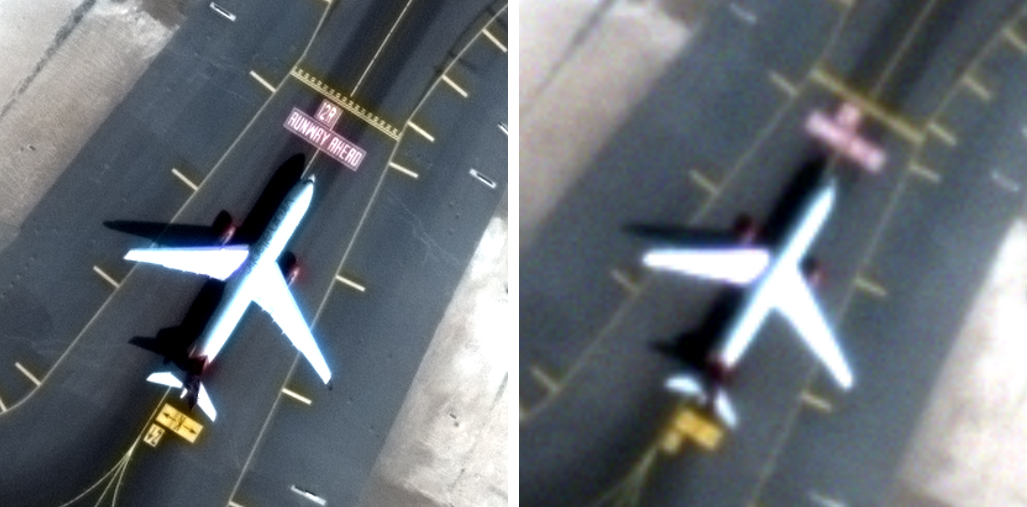

Back to the TV example: what happens when, despite knowing better, we try to “enhance that” by running image enhancement on the 1 m image? Here’s what happens when we sharpen it once:

And again:

And once more:

As you can see, an image is just like a book, a painting, or a master audio recording: the information captured at its creation is all that will be available a day, a month, or a decade later. So when you hear someone say that a low-quality satellite image can show you the same thing as a high-quality image, feel free to point out that making it bigger or sharper doesn’t make it clearer.
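The same point can be made numerically. The sketch below is a hypothetical one-dimensional stand-in for a satellite image, not the processing behind the TV-example pictures. `capture` simulates a coarse sensor by averaging four fine samples into one pixel. `sharpen` is a basic unsharp-mask filter. The scene values, the 4-sample block size and the sharpening amount are all illustrative assumptions. Two different scenes end up as identical coarse pixels, so every sharpening pass gives identical results for both.

```python
# Minimal sketch: once detail is averaged away at capture time,
# no amount of sharpening can bring it back.
# Scene values, block size and sharpening amount are illustrative
# assumptions, not real satellite parameters.


def capture(scene, block=4):
    """Simulate a coarse sensor: each pixel is the mean of `block`
    consecutive fine-resolution scene samples."""
    return [sum(scene[i:i + block]) / block
            for i in range(0, len(scene), block)]


def sharpen(pixels, amount=1.0):
    """One pass of a simple 1D unsharp mask: push each pixel away from
    the average of its two neighbours (edge pixels reuse themselves)."""
    n = len(pixels)
    out = []
    for i, p in enumerate(pixels):
        left = pixels[max(i - 1, 0)]
        right = pixels[min(i + 1, n - 1)]
        out.append(p + amount * (p - (left + right) / 2))
    return out


def sharpen_repeatedly(pixels, passes):
    """Apply `sharpen` the given number of times."""
    for _ in range(passes):
        pixels = sharpen(pixels)
    return pixels


# Hypothetical scenes at 0.25 m per sample: two thin bright lines
# versus a single wider bright strip, both inside the same 1 m pixel.
TWO_LINES = [0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 0]
ONE_STRIP = [0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0]

coarse_a = capture(TWO_LINES)  # [0.0, 0.5, 0.0]
coarse_b = capture(ONE_STRIP)  # [0.0, 0.5, 0.0]

# Sharpen once, again, and once more.
sharp_a = sharpen_repeatedly(coarse_a, 3)
sharp_b = sharpen_repeatedly(coarse_b, 3)

print(sharp_a == sharp_b)  # the two scenes still cannot be told apart
print(sharp_a)  # contrast keeps growing, with values below 0 and above 1
```

Each pass only exaggerates the contrast that is already there. In a real image those out-of-range values would typically be clipped, which is one reason heavily sharpened images tend to show halos around edges rather than new detail.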

As you can see, an image is just like a book, a painting, or a master audio recording. The information that is captured at its creation is all that will be available a day, a month, or a decade later on. So when you hear someone say that a low-quality satellite image can show you the same thing as a high-quality image, feel free to point out that making it bigger doesn’t make it clearer.