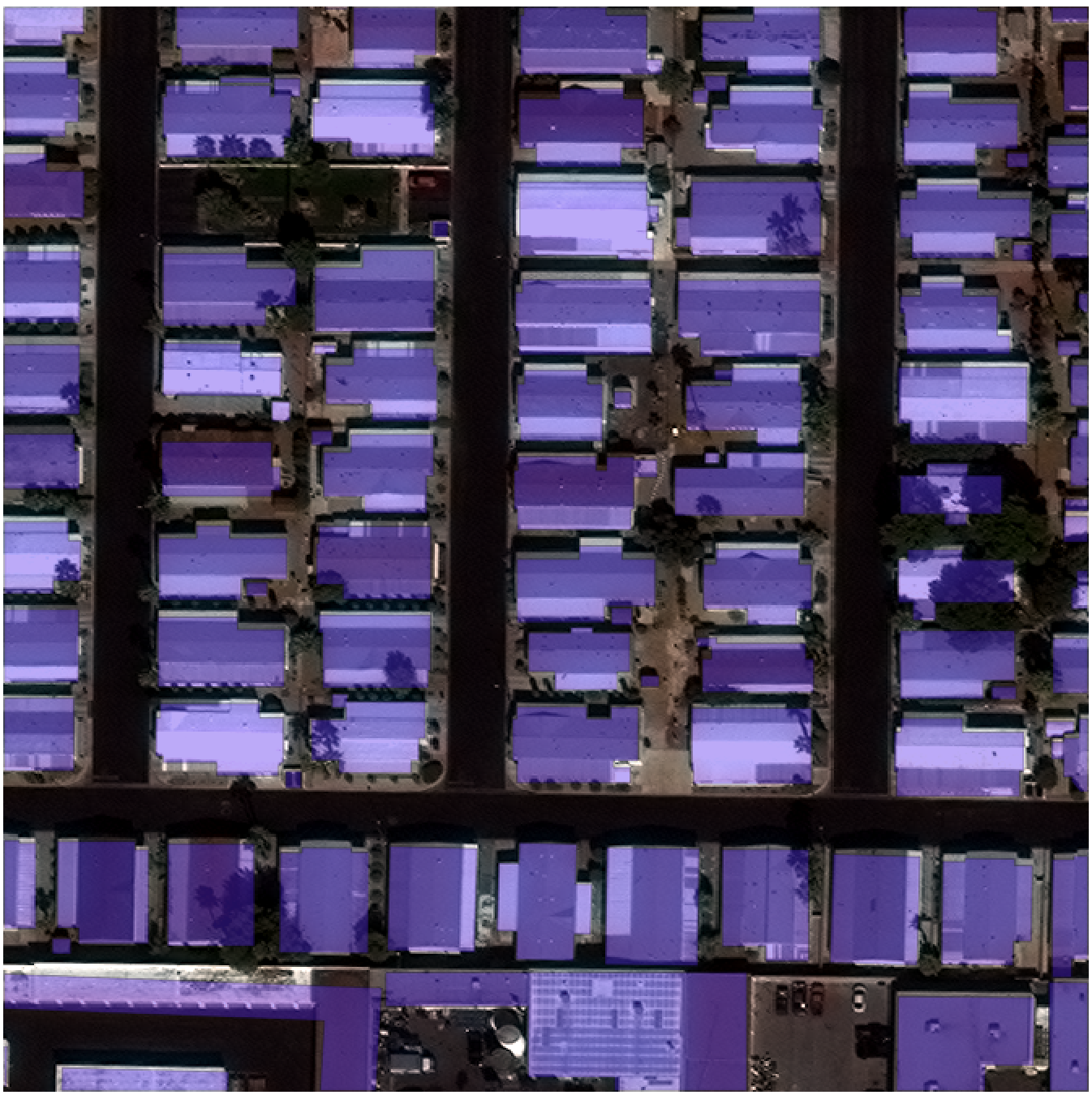

30 cm WorldView-3 imagery and building footprints in Las Vegas, Nevada

We used the SpaceNet on AWS open corpus of satellite imagery and geospatial data as the source of training data for the SpaceNet Challenge. The results of Round 1 were a step toward automated building footprint extraction, though there was still room for improvement. Insights from Round 1 informed the following changes for Round 2:

Improved Training Data – The building footprints are of higher quality: they were derived from 30 cm WorldView-3 image strips (versus the 50 cm mosaic used in Round 1) using more accurate and consistent techniques for producing the "ground truth" footprints. The footprints cover new areas in four geographically diverse cities, helping developers train algorithms that handle different building types and construction materials.

Data Formats – Multiple imagery formats (panchromatic, multispectral, RGB-pansharpened, and multispectral-pansharpened) are provided so that participants can experiment with different types of imagery for training. We are interested in evaluating the potential benefits of various image processing techniques and in seeing how multispectral information can improve performance.
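For readers new to pansharpening, the idea behind the pansharpened formats can be illustrated with the Brovey transform, a simple textbook method that upsamples the multispectral bands and rescales them so that their per-pixel mean matches the higher-resolution panchromatic band. This is a minimal sketch under stated assumptions, not necessarily how the SpaceNet pansharpened products were generated; the function names and the nearest-neighbour upsampling are our own choices. The resolution `factor` would be 4 for WorldView-3, whose panchromatic band is about 0.31 m and whose multispectral bands are about 1.24 m at nadir.

```python
import numpy as np


def upsample_nearest(ms: np.ndarray, factor: int) -> np.ndarray:
    """Nearest-neighbour upsampling of a (bands, h, w) array by an integer factor."""
    return np.repeat(np.repeat(ms, factor, axis=1), factor, axis=2)


def brovey_pansharpen(ms: np.ndarray, pan: np.ndarray, factor: int,
                      eps: float = 1e-6) -> np.ndarray:
    """Brovey-transform pansharpening (illustrative sketch, hypothetical helper).

    ms:     low-resolution multispectral bands, shape (bands, h, w)
    pan:    high-resolution panchromatic band, shape (h * factor, w * factor)
    factor: ratio of multispectral pixel size to panchromatic pixel size
    """
    ms_up = upsample_nearest(ms.astype(np.float64), factor)
    pan = pan.astype(np.float64)
    if ms_up.shape[1:] != pan.shape:
        raise ValueError("pan shape must equal multispectral shape times factor")
    # Per-pixel intensity: the mean of the upsampled multispectral bands.
    intensity = ms_up.mean(axis=0)
    # Scale every band by pan / intensity: this injects the panchromatic
    # band's spatial detail while preserving the ratios between bands.
    ratio = pan / (intensity + eps)
    return ms_up * ratio[np.newaxis, :, :]
```

Production pansharpening also handles band co-registration, radiometric scaling, and higher-quality resampling; the sketch ignores all of these to keep the core idea visible.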

External Data and Pretrained Models – The use of external data and pretrained models is explicitly allowed. The implementations of the Round 1 winning algorithms are described on CosmiQ Works' blog. We believe it makes sense to build on existing solutions, since many advances in computer vision have come about this way.

Teams – To encourage collaboration, we are allowing teams of up to five people. Organizations and companies can also participate; they are not eligible for prize money but can place in the standings if they are willing to share their solution. Organizations must self-identify in the challenge forum.

Competition Length – The challenge now runs nine weeks instead of three, giving developers more time to implement and refine their solutions. A longer challenge also opens participation to a broader range of contestants.

New Prize Categories – Cash prizes will be awarded to the three performers with the highest average score across all cities. Prizes will also be awarded for the best score in each individual city, along with an early incentive prize. See here for details.
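The two ranking rules, highest average score across all cities and best score within each city, can be sketched in a few lines of Python. This is a hypothetical illustration, not the official scoring code: the function names are ours, each per-city score is treated as an opaque number, and scoring a city a team did not submit as 0.0 is an assumption. Tie-breaking and prize eligibility are not modeled.

```python
from __future__ import annotations

from statistics import mean


def rank_by_average(scores: dict[str, dict[str, float]], cities: list[str],
                    top_n: int = 3) -> list[tuple[str, float]]:
    """Return the top_n (team, average score) pairs, highest average first.

    Hypothetical helper: a team with no score for a city is treated as
    scoring 0.0 there (an assumption made for this sketch).
    """
    averages = {
        team: mean(by_city.get(city, 0.0) for city in cities)
        for team, by_city in scores.items()
    }
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)[:top_n]


def best_per_city(scores: dict[str, dict[str, float]], cities: list[str]) -> dict[str, str]:
    """Return the team with the highest score in each city (hypothetical helper)."""
    return {
        city: max(scores, key=lambda team: scores[team].get(city, 0.0))
        for city in cities
    }
```

Averaging across cities rewards solutions that generalize: a team that excels in one city but skips or does poorly in others can still win a per-city prize without placing in the overall top three.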

What's next? Round 2 will conclude on May 23, and we expect to announce the winners about a week later. More SpaceNet Challenges are planned for 2017, and we will announce details in the coming weeks. As a sneak preview, we are interested in exploring the automatic detection of indicators of activity and change, both human-related and naturally occurring.

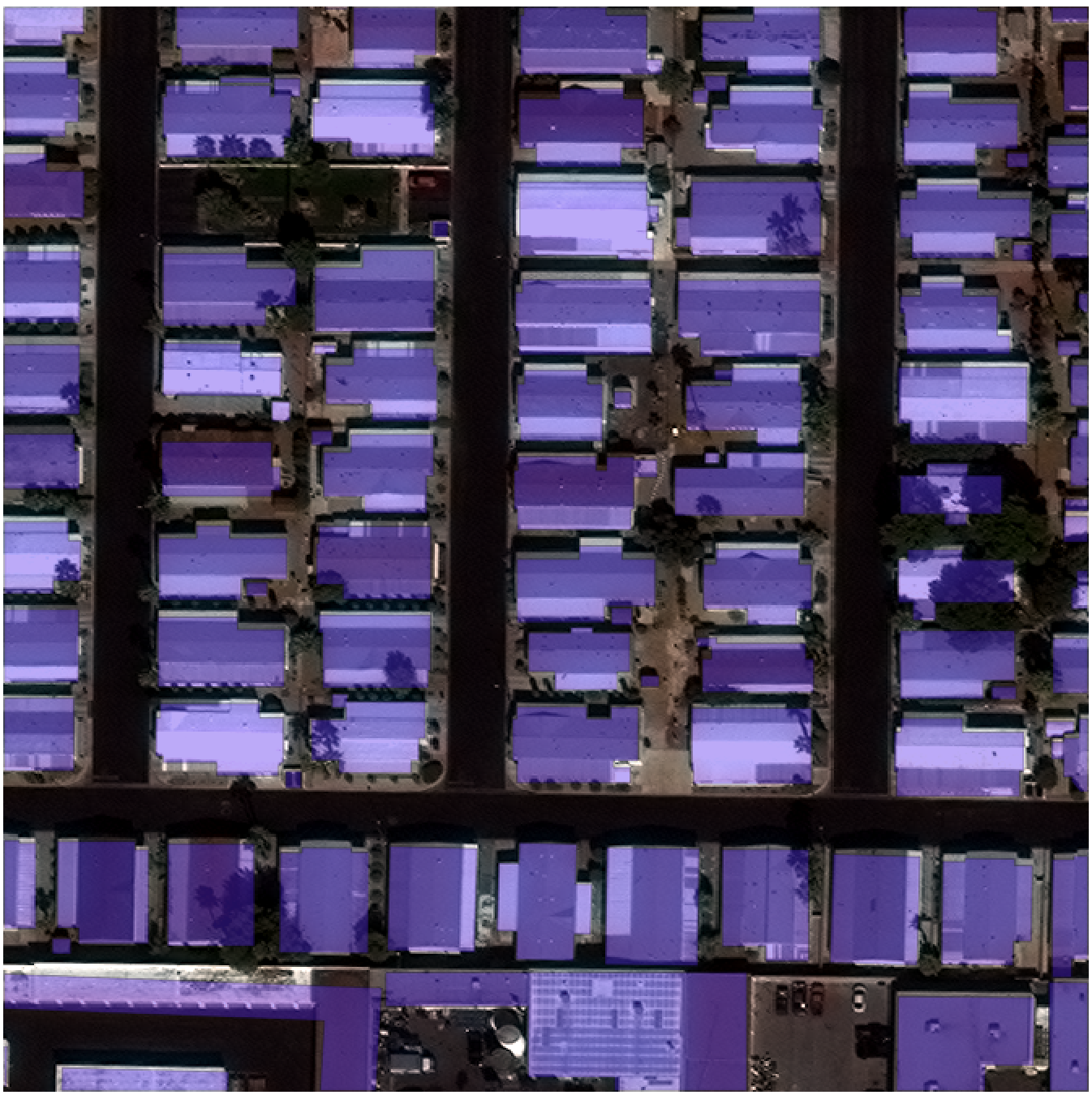

30cm WorldView-3 imagery and building footprints in Las Vegas, Nevada[/caption]

We utilized the SpaceNet on AWS open corpus of satellite imagery and geospatial data as the source of training data for The SpaceNet Challenge. The results of the first challenge were a step toward automation, though there remained room for improvement. The insights we gained from Round 1 of the competition informed the following changes for Round 2:

Improved training data - The quality of the building footprints has been improved using 30 cm image strips from WorldView-3 (versus a 50 cm mosaic) and more accurate and consistent techniques to derive the “ground truth” footprints. The footprints cover new areas in four geographically diverse cities to help developers improve algorithm training for different building types and construction materials.

Data formats - Multiple imagery formats (panchromatic, multi-spectral, RGB-pansharpen, multispectral-pansharpen) are provided to allow experimentation with different types of imagery for training. We are interested in evaluating the potential benefits of various image processing techniques and seeing how multi-spectral information can be used to improve performance.

External Data and Pretrained Models – The use of external data and pretrained models are explicitly allowed. The implementations for the Round 1 winning algorithms are described on CosmiQ Works’ blog. Our belief is that it makes sense to build from existing solutions, as many improvements in computer vision follow this trend.

Teams – In the spirit of encouraging more collaboration, we are allowing teams of up to five people. Organizations/companies can also participate but will not be eligible for prize money and but can place in the standings if they are willing to share their solution. Organizations must self-identify in the challenge forum.

Competition Length – The challenge is longer (nine weeks instead of three weeks) to allow developers more time to implement and improve their solutions. A longer challenge also opens the window for participation to a broader range of contestants.

New Prize Categories – Cash prizes will be awarded to the top three performers with the highest average score across all cities. The best score for individual cities and an early incentive prize will also be awarded. See here for details.

What’s next? Round 2 will conclude on May 23, and we expect to announce the winners about a week later. More SpaceNet Challenges are in the works for 2017, and we will announce details in the coming weeks. As a sneak preview, we are interested to explore automatically detecting indicators of activity and change – both human-related and naturally occurring.

30cm WorldView-3 imagery and building footprints in Las Vegas, Nevada[/caption]

We utilized the SpaceNet on AWS open corpus of satellite imagery and geospatial data as the source of training data for The SpaceNet Challenge. The results of the first challenge were a step toward automation, though there remained room for improvement. The insights we gained from Round 1 of the competition informed the following changes for Round 2:

Improved training data - The quality of the building footprints has been improved using 30 cm image strips from WorldView-3 (versus a 50 cm mosaic) and more accurate and consistent techniques to derive the “ground truth” footprints. The footprints cover new areas in four geographically diverse cities to help developers improve algorithm training for different building types and construction materials.

Data formats - Multiple imagery formats (panchromatic, multi-spectral, RGB-pansharpen, multispectral-pansharpen) are provided to allow experimentation with different types of imagery for training. We are interested in evaluating the potential benefits of various image processing techniques and seeing how multi-spectral information can be used to improve performance.

External Data and Pretrained Models – The use of external data and pretrained models are explicitly allowed. The implementations for the Round 1 winning algorithms are described on CosmiQ Works’ blog. Our belief is that it makes sense to build from existing solutions, as many improvements in computer vision follow this trend.

Teams – In the spirit of encouraging more collaboration, we are allowing teams of up to five people. Organizations/companies can also participate but will not be eligible for prize money and but can place in the standings if they are willing to share their solution. Organizations must self-identify in the challenge forum.

Competition Length – The challenge is longer (nine weeks instead of three weeks) to allow developers more time to implement and improve their solutions. A longer challenge also opens the window for participation to a broader range of contestants.

New Prize Categories – Cash prizes will be awarded to the top three performers with the highest average score across all cities. The best score for individual cities and an early incentive prize will also be awarded. See here for details.

What’s next? Round 2 will conclude on May 23, and we expect to announce the winners about a week later. More SpaceNet Challenges are in the works for 2017, and we will announce details in the coming weeks. As a sneak preview, we are interested to explore automatically detecting indicators of activity and change – both human-related and naturally occurring.

30cm WorldView-3 imagery and building footprints in Las Vegas, Nevada[/caption]

We utilized the SpaceNet on AWS open corpus of satellite imagery and geospatial data as the source of training data for The SpaceNet Challenge. The results of the first challenge were a step toward automation, though there remained room for improvement. The insights we gained from Round 1 of the competition informed the following changes for Round 2:

Improved training data - The quality of the building footprints has been improved using 30 cm image strips from WorldView-3 (versus a 50 cm mosaic) and more accurate and consistent techniques to derive the “ground truth” footprints. The footprints cover new areas in four geographically diverse cities to help developers improve algorithm training for different building types and construction materials.

Data formats - Multiple imagery formats (panchromatic, multi-spectral, RGB-pansharpen, multispectral-pansharpen) are provided to allow experimentation with different types of imagery for training. We are interested in evaluating the potential benefits of various image processing techniques and seeing how multi-spectral information can be used to improve performance.

External Data and Pretrained Models – The use of external data and pretrained models are explicitly allowed. The implementations for the Round 1 winning algorithms are described on CosmiQ Works’ blog. Our belief is that it makes sense to build from existing solutions, as many improvements in computer vision follow this trend.

Teams – In the spirit of encouraging more collaboration, we are allowing teams of up to five people. Organizations/companies can also participate but will not be eligible for prize money and but can place in the standings if they are willing to share their solution. Organizations must self-identify in the challenge forum.

Competition Length – The challenge is longer (nine weeks instead of three weeks) to allow developers more time to implement and improve their solutions. A longer challenge also opens the window for participation to a broader range of contestants.

New Prize Categories – Cash prizes will be awarded to the top three performers with the highest average score across all cities. The best score for individual cities and an early incentive prize will also be awarded. See here for details.

What’s next? Round 2 will conclude on May 23, and we expect to announce the winners about a week later. More SpaceNet Challenges are in the works for 2017, and we will announce details in the coming weeks. As a sneak preview, we are interested to explore automatically detecting indicators of activity and change – both human-related and naturally occurring.